The Communication Cube

- Thread starter Jourdy288

JDTAY

Half Pepperoni, All Cheese

Hey guys, just a heads-up, Iago the Aflac Duck is dead.

> As soon as someone links your RL identity to your public addresses they can see your entire Bitcoin history

So use a blender. The tricky part is converting your bitcoin to fiat and vice versa.

I don't know if bitcoin is a pyramid scheme, but if you look at the market capitalization compared to other asset classes it's already pretty fat. We will never see returns like we did in the past. It's not going to make random people filthy rich via buy-and-hold strategies. HFT is another story.

TBH I don't really see a use for bitcoin other than as an alternative market or a risky asset for your portfolio. I used to think it was cool as a decentralised financial system with a baked-in monetary policy, but I don't anymore. I hate mining; it's stupid, just brute-force computing. What bitcoin did prove is that digital signatures are reliable for proving ownership, so that's all we really need and we can delete our blockchains.
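To make the "signatures prove ownership" point concrete, here is a minimal sketch of a hash-based Lamport one-time signature. This is not what Bitcoin uses (Bitcoin signs with ECDSA over secp256k1); Lamport is swapped in only because it needs nothing beyond the Python standard library. The function names are hypothetical, and each key pair must sign one message only.

```python
import hashlib
import secrets


def keygen(bits=256):
    """Return (private_key, public_key) for a Lamport one-time signature."""
    # Private key: two random 32-byte secrets for every bit of the message digest.
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(bits)]
    # Public key: the SHA-256 hash of every secret.
    pk = [(hashlib.sha256(a).digest(), hashlib.sha256(b).digest()) for a, b in sk]
    return sk, pk


def _digest_bits(message):
    """SHA-256 digest of the message as a list of 256 bits (0 or 1)."""
    digest = hashlib.sha256(message).digest()
    return [(byte >> (7 - i)) & 1 for byte in digest for i in range(8)]


def sign(sk, message):
    """Reveal one secret per digest bit; whoever holds sk can do this."""
    return [sk[i][bit] for i, bit in enumerate(_digest_bits(message))]


def verify(pk, message, signature):
    """Check that every revealed secret hashes to the matching public value."""
    bits = _digest_bits(message)
    if len(signature) != len(bits):
        return False
    return all(
        hashlib.sha256(secret).digest() == pk[i][bit]
        for i, (secret, bit) in enumerate(zip(signature, bits))
    )


if __name__ == "__main__":
    sk, pk = keygen()
    claim = b"this coin now belongs to Alice"
    sig = sign(sk, claim)
    print(verify(pk, claim, sig))
    print(verify(pk, b"this coin now belongs to Mallory", sig))
```

Anyone holding the public key can check the claim, but only the holder of the private key could have produced the signature. What the sketch does not solve is double spending, which is the part the blockchain was built for.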

The other thing that similarly sucks up all available processing power is deep learning. I think deep learning is dumb and we (humanity) should be ashamed that we can't come up with something better (closed form solutions basically). We have literally run out of ideas. Tech boom is over. Over and out.

> Tech boom is over. Over and out.

Which is good. Like with most booms, it's better when it's over. We just have to stop pretending it isn't over and get to work on building quality, honesty and stability.

λ the β-Redex Reducer

Hardcore Member

- Joined: Sep 13, 2016
- Messages: 1,566
- Age: 56

> TBH i don't really see a use for bitcoin other than as an alternative market or risky asset for your portfolio. I used to think it was cool as a decentralised financial system with a baked in monetary policy but I don't anymore. I hate mining it's stupid - brute force computing. What bitcoin did prove is that digital signatures are reliable for proving ownership so that's all we really need and can delete our blockchains.

Bitcoin is the pioneer, though. We now have other mechanisms which are more economical with energy than PoW, and there are cryptocurrencies such as Ethereum Classic which have many more capabilities than Bitcoin does. I think that technologically Bitcoin is pretty lame compared to its alternatives, but I respect Bitcoin for being the pioneer. The pioneer is always inferior to whatever is built upon the lessons it taught the world.

> We will never see returns like we did in the past. It's not going to make random people filthy rich via buy-and-hold strategies.

People who stepped aboard the Bitcoin train do not need another ride.

JDTAY

Half Pepperoni, All Cheese

Oh man, we haven’t had any discussion of how Elon Musk wants to buy 100% of Twitter and replace it with a solid gold antimatter statue of Elon Musk. A shakeup this big might actually get me to use Twitter.

Between the lines: The number $54.20 is notable as Musk likes to make reference to "420" - a well-known time in cannabis culture. His infamous tweet to take Tesla private (which ran him afoul of the SEC) was for $420 per share.

Null

Snug

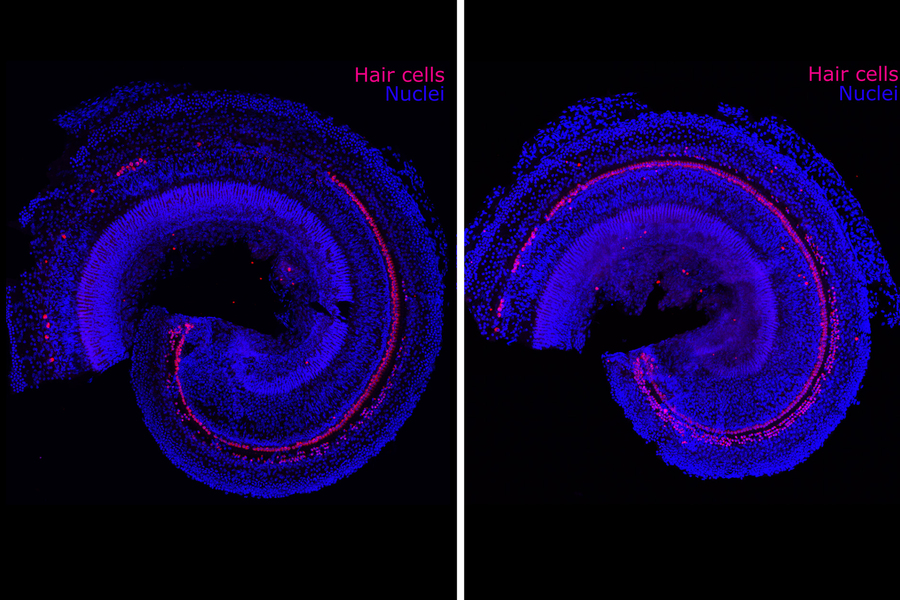

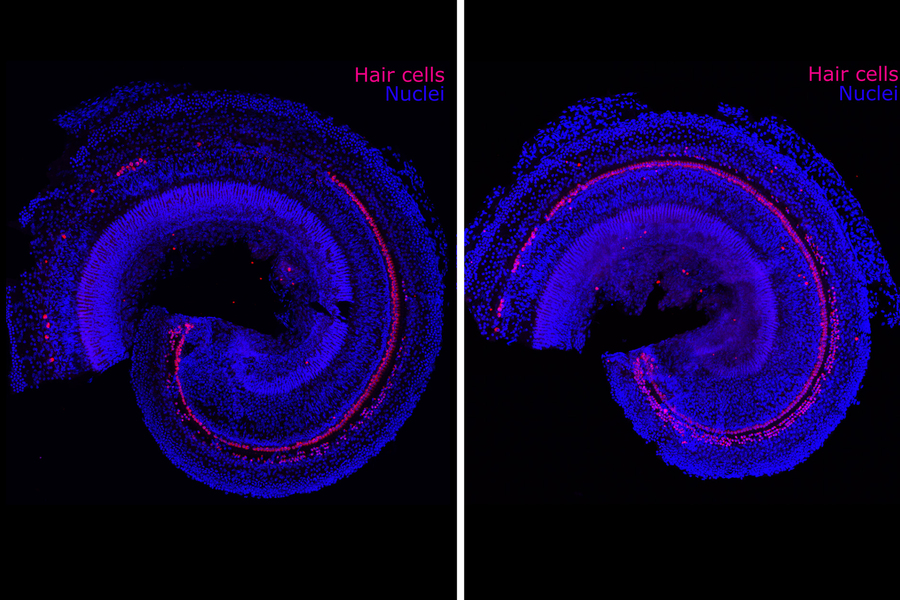

MIT Scientists Develop New Regenerative Drug That Reverses Hearing Loss

MIT spinout Frequency Therapeutics’ drug candidate stimulates the growth of hair cells in the inner ear. The biotechnology company Frequency Therapeutics is seeking to reverse hearing loss — not with hearing aids or implants, but with a new kind of regenerative therapy. The company uses small mol

web.archive.org

I'm going to hell for sure: I just watched "Life of Brian". The Netflix version was English only, and the scenes with Pilate and his speech impediment didn't work that well for me. In German he says something like „werft den Purschen zu Pohden“ (a mangled "throw the lad to the ground"), but as I'm not a native English speaker, I can't tell what's so funny about how he speaks.

Null

Snug

Ironmouse Opens the Kamen Rider Belt on Stream

Source: https://youtu.be/-tbDfV0VqdM?t=16137 (04:28:57) Official links below Ironmouse: https://www.twitch.tv/ironmouse https://www.youtube.com/ironmouseparty https://twitter.com/Ironmouse https://www.instagram.com/ironmouseparty https://www.patreon.com/ironmouse...

> Could be. When do you expect the pyramid to collapse?

That's the thing. Once it's too big, there will be powers that try to keep it from collapsing. Personally I think that once the Central Bank has their tokens ready (another type of digital coin), climate change policies will prevent mining by making it illegal, while maybe still allowing existing bitcoins to be exchanged for these tokens.

> The other thing that similarly sucks up all available processing power is deep learning.

I disagree. Deep-learned matrices can be sold, and keep being useful. Examples: people who apply for a loan fill in a form, and a matrix is used to detect applicants who are not going to pay it back. That result is then given to a human, who decides whether the loan is granted or not. Or, closer to home: a door that only lets your cat in, and not other cats, by using a computer vision matrix that has learned to detect your cat. Of course, the door problem can also be solved with an RFID tag in the cat's collar.
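A minimal sketch of the loan example, assuming the "matrix" is nothing more than a weight vector fed through a logistic function. The feature names, weights and threshold below are hypothetical and hand-picked for illustration; a real model would learn them from past loans. The score only flags an application, and the decision stays with a human.

```python
import math

# Hypothetical weights standing in for a trained model (not learned from real data).
WEIGHTS = {
    "debt_to_income": 3.0,    # higher ratio -> higher risk
    "missed_payments": 0.8,   # each missed payment adds risk
    "years_employed": -0.3,   # stable employment lowers risk
}
BIAS = -2.0


def default_risk(application):
    """Estimated probability (0..1) that the applicant will not pay back."""
    z = BIAS + sum(WEIGHTS[name] * application[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


def triage(application, threshold=0.5):
    """Score the form and flag it; the loan officer makes the actual decision."""
    risk = default_risk(application)
    return {"risk": risk, "flag_for_review": risk >= threshold}


if __name__ == "__main__":
    steady = {"debt_to_income": 0.1, "missed_payments": 0, "years_employed": 10}
    shaky = {"debt_to_income": 0.9, "missed_payments": 4, "years_employed": 0}
    print(triage(steady))
    print(triage(shaky))
```

Once trained, the weights are just numbers, which is why they can be packaged and sold separately from the data they were learned from.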

Deep learning - Wikipedia

I agree that deep learning is not a panacea. But imagine cars getting better and better at driving because of all the feedback they give each other. And then humans are too afraid to drive, because the machines just drive bumper to bumper since that's a more efficient use of the road.

So you can keep going to concerts and enjoy the music next to the giant speakers. Still, you will lose your earrings because MIT did nothing to solve that. And asking around for "Hey, have you seen Golden Earrings" gives so many false positives... everybody has seen them, but I can't find them.

Last night I had a dream: I was on some sort of school class trip by airplane to a "party island", and I had the Pyra and my EDC gear with me in my backpack.

Then the plane went down over the sea and was about to do the same as the Russian flagship. So I wanted to rescue my backpack, not only because a new Pyra would take me 2 Months (tm) to get, but also because I hadn't saved the ROMs on any drive other than the Pyra 2 SD card (I also have them on Pyra 1, but Pyra 1 was also in the Pyra).

And what happened today? My Pyra 2 SD card got corrupted when I tried to put some stuff on it using the Phytium PC. Maybe I didn't unmount it safely.

So I copied all the ROMs from Pyra 1 to my SSD, formatted the SD card from the Switch Lite as ext4 so it won't cause any issues, and put everything back on the new Pyra 2 SD card. Unfortunately my savegames are now gone, but fortunately I didn't have that many.

I also lost the BIOS files for Dreamcast and PlayStation, but I found new ones on the internet.

Oh, and as I also lost the ROM folders on the old Pyra 2 card, I now have every ROM in one folder per system.

The GTA 3 and Vice City savegames are also gone, but at least those were downloaded from the internet.

I think everything works now, from the Atari 2600 to the SNES. Only the C64 has its old issue back, where it moans about its printer etc.

Null

Snug

> That's the thing. Once it's too big, there will be powers that try to keep from collapsing. Personally I think that once the Central Bank has their tokens ready (another type of digital coin), then climate change policies will prevent mining by making it illegal; while maybe still allowing existing bitcoins to be exchanged to these tokens.

I thought that already happened? Mining was banned in some jurisdictions, and then miners migrated en masse somewhere else, causing mining to be banned there too in order to prevent blackouts... It's not banned everywhere yet, of course, and some countries have even adopted cryptocurrencies. I don't know, "too big to fail" sounds too much at odds with anarchist utopias to apply. What do I know?

> I disagree. Deep-learned matrices can be sold, and keep being useful. Examples: People that apply for a loan fill in a form, and there's a matrix that is used that detects people that are not going to pay back. This data is then given to a human, that will decide if the loan is granted, or not.

I guess I don't need to tell you about Dutch child care programs, do I? And there are privacy issues with model matrices, which make them more difficult to sell than it might seem.

> I agree on that Deep Learning is not a panacea. But Imagine cars getting better and better at driving because all the feedback they give each other. And then humans are too afraid to drive because the machines just drive bumper to bumper because that's more efficient use of the road.

Deep learning is very fragile. It's not useless, it's just way oversold. And it's dangerous, because then people get faulty systems and think they work. People love to believe in magic, and deep learning is intrinsically difficult to explain to users. So you get police patrols stopping driverless cars, mothers killing themselves because an automated system fined them for more money than they ever earned, or drugs being available without anyone quite knowing why they work. I once even read a mathematician complaining that nowadays, with theorem solvers, colleagues were applying results without properly checking all the (nonsensical, but required) constraints, and piling wrong results on top of wrong results from that. And if a mathematician can get it wrong, an engineer can be fooled, a marketing manager can be sure it's so great, and an end user can't be arsed to understand what they're using. And someone gets to pick up the pieces.

Null

Snug

Onlive Traveler: AVATARA Documentary by 536 Productions (2003)

AVATARA, a documentary about Onlive Traveler and its inhabitants (made in 2003) by 536 Productions of Vancouver, BC, Canada. Onlive Traveler was released in 1996. The description of this video explains how to join and see it for yourself. http://www.youtube.com/watch?v=9J1slS6QYe0 This user...

Null

Snug

GNU Health - News: The Free Software community mourns the loss of Pedro Francisco (MasGNULinux) [Savannah]

Savannah is a central point for development, distribution and maintenance of free software, both GNU and non-GNU.

> intrinsically difficult to explain to users

Regression analysis to the umpteenth degree. My father has never used a computer and gets this.

Imagine you are a trading company that has a stock trading algorithm (bot) that uses reinforcement learning to continuously improve its performance. It's making good money, but you can't understand exactly how it works, so it makes you nervous. Now imagine it starts making more money than all your other algorithms. You internalize your nervousness and use it exclusively. Now imagine it's not imaginary and it's happening all around us.

> Regression analysis to the umpteenth degree. My father has never used a computer and gets this.

You can only explain it because you have oversimplified the problem. You invest looking only at profits (and within some timeframe, too). Deep learning is applied to everything from art to justice, with its implications handwaved away.

Look at this other scenario:

In a language course, the teacher looks for any subject that helps people talk, even if it's somewhat controversial. One day the teacher brings up the subject of self-driving cars.

One of the students claims to be a test pilot for new prototype cars (at a mainstream car company trying to catch up with the market disruptors), testing cars intended for the mass market just before release, while they can still be modified. He claims to know, but he's apparently no engineer.

The teacher asks how a self-driving car should behave when it has to decide whether to hit an old man or a child in a critical situation. The point being that leaving philosophical questions to machines is troublesome.

The student says he's guessing it would hit the child, since the child takes up fewer pixels on the cameras, so hitting the smaller obstacle would seem safer.

The rest of the class is flabbergasted that someone might reduce self-driving to just crashing into the smaller object whenever the crash can't be avoided at all.

Nobody is too worried about whether the self-proclaimed test pilot is right. The problem is that they realize they hadn't even thought about what the problem was; they were simply assuming someone had solved it. Well, maybe someone has solved a problem and given it the same name as someone else's. But those claiming to have solved it probably can't say what the car would do in that scenario. They could say something like "well, it's trained on so many hours of footage from real people driving cars, so it would most likely do what most people do in that situation". But nobody knows whether a particular situation appears in the footage, whether it has been overridden by different situations, whether the real situation will or will not match... It's just some statistic: "we've had fewer accidents than human-driven cars". So what? Humans are assumed to have a right to exist and make decisions, which one can afterwards judge and punish if found wrong. Machines don't even have a right to exist, let alone make decisions, so people assume the humans designing them are responsible and must be able to explain the design decisions. With deep learning, the engineer just can't really explain the decisions embedded in the model, only how the training material was selected, the neural network topology, etc. Yes, but why did it hit the child?
